Use UNLOAD to extract large result sets-in Redshift, fetching a large number of rows using SELECT stalls the cluster leader node, and thus the entire cluster. Configure to run with 5 or fewer slots, claim extra memory available in a queue, and take advantage of dynamic memory parameters. Define a separate workload queue for ETL runtime.

To avoid commit-heavy processes like ETL running slowly, use Redshift’s Workload Management engine (WLM). Use workload management-Redshift is optimized primarily for read queries. The Analyze & Vacuum Utility helps you schedule this automatically. Frequently run the ANALYZE operation to update statistics metadata, which helps the Redshift Query Optimizer generate accurate query plans. To avoid performance problems over time, run the VACUUM operation to re-sort tables and remove deleted blocks. Perform table maintenance regularly-Redshift is a columnar database. FROM staging_table ( weekly aggregate ) Commit FROM staging_table ( daily aggregate ) DELETE FROM weekly_table WHERE weekending_date =? INSERT INTO weekly_table SELECT. FROM source ( transformation logic ) DELETE FROM daily_table WHERE dataset_date =? INSERT INTO daily_table SELECT. Below is an example provided by Amazon:īegin CREATE temporary staging_table INSERT INTO staging_table SELECT. Run multiple SQL queries to transform the data, and only when in its final form, commit it to Redshift. If you have multiple transformations, don’t commit to Redshift after every one.

Multiple steps in a single transaction-commits to Amazon Redshift are expensive. This is faster than CREATE TABLE AS or INSERT INTO.

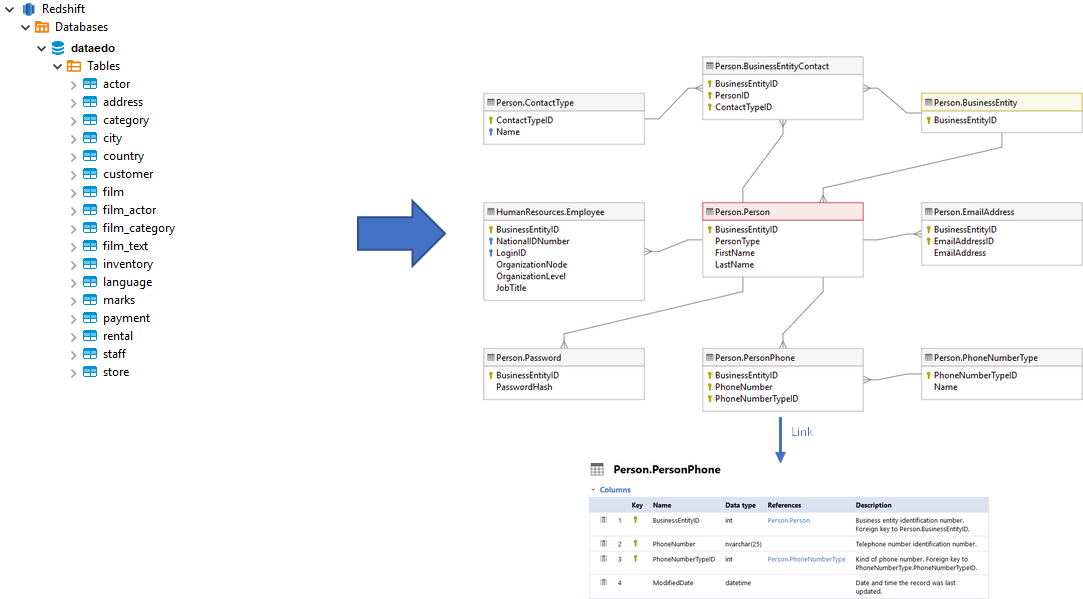

Use temporary staging tables to hold data for transformation, and run the ALTER TABLE APPEND command to swap data from staging tables to target tables. Use Amazon manifest files to list the files to load to Redshift from S3, avoiding duplication. Ensure each slice gets the same amount of work by splitting data into equal-sized files, between 1MB-1GB.īulk load data from S3-retrieve data from data sources and stage it in S3 before loading to Redshift. Workloads are broken up and distributed to multiple “slices” within compute nodes, which run tasks in parallel. Build your own Redshift ETL PipelineĪmazon recommends you design your ETL process around Redshift’s unique architecture, to leverage its performance and scalability.įollow these best practices to design an efficient ETL pipeline for Amazon Redshift:ĬOPY from multiple files of the same size-Redshift uses a Massively Parallel Processing (MPP) architecture (like Hadoop). Start small and scale up indefinitely by adding more machines or more Redshift clusters (for higher concurrency). You can easily build a cluster of machines to store data and run very fast relational queries. Redshift is a petabyte-scale, managed data warehouse from Amazon Web Services. What is ETL?Įxtract-Transform-Load (ETL) is the process of pulling structured data from data sources like OLTP databases or flat files, cleaning and organizing the data to facilitate analysis, and loading it to a data warehouse. In this post you’ll learn how AWS Redshift ETL works and the best method to use for your use case.
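As a rough illustration of the manifest-based bulk load practice described above, here is a minimal Python sketch that builds a manifest listing each staged S3 file once and then composes a matching COPY command. The bucket, file names, table name, and IAM role are hypothetical placeholders, and `build_manifest` and `copy_statement` are helper names invented for this example. The sketch only produces text and does not connect to S3 or Redshift.

```python
import json


def build_manifest(urls):
    """Return the JSON text of a COPY manifest listing each S3 object once."""
    unique_urls = list(dict.fromkeys(urls))  # drop repeats, keep original order
    entries = [{"url": url, "mandatory": True} for url in unique_urls]
    return json.dumps({"entries": entries}, indent=2)


def copy_statement(table, manifest_url, iam_role):
    """Return a COPY command that loads the CSV files named in the manifest."""
    return (
        f"COPY {table}\n"
        f"FROM '{manifest_url}'\n"
        f"IAM_ROLE '{iam_role}'\n"
        "FORMAT AS CSV\n"
        "MANIFEST;"
    )


# Hypothetical bucket, table, and role names, for illustration only.
files = [f"s3://example-bucket/staging/part-{i:04d}.csv" for i in range(4)]
manifest_text = build_manifest(files + files[:1])  # the repeated file is listed once
copy_sql = copy_statement(
    "staging_table",
    "s3://example-bucket/staging/load.manifest",
    "arn:aws:iam::123456789012:role/ExampleRedshiftRole",
)
print(manifest_text)
print(copy_sql)
```

In practice the manifest text would be uploaded to the S3 location named in the COPY command before that command runs. Marking entries as mandatory makes the load fail if a listed file is missing, instead of silently skipping it.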
